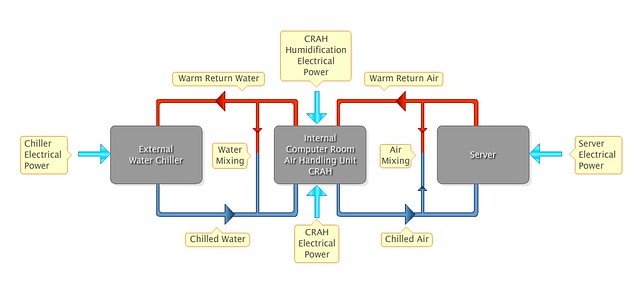

At the end of last week, Facebook announced a new way to report the PUE (Power Usage Effectiveness) and WUE (Water Usage Effectiveness) of its data centers.

This comes hot on the heels of eBay’s announcement of its Digital Service Efficiency dashboard: a single screen reporting the cost, performance and environmental impact of customer buy and sell transactions on eBay.

These dashboards are a big step forward in making data centers more transparent about the resources they consume, and about how efficient (or otherwise) those data centers are.

Even better, both organisations are working to make their dashboards a standard, so that their data centers can be compared directly with those of other organisations using the same dashboard.

There are a number of important differences between the two dashboards, however.

To start with, Facebook’s data is near-realtime (updated every minute, albeit with a 2.5-hour delay), whereas eBay’s data is updated quarterly, which is nowhere near realtime.

Facebook also includes environmental data (external temperature and humidity), as well as options to review the PUE, WUE, humidity and temperature data for the last 7, 30 or 90 days, or the last year.

eBay’s dashboard, on the other hand, is (perhaps unsurprisingly) more business-focussed, giving metrics such as revenue per user ($54), transactions per kWh (45,914) and active users (112.3 million). Facebook’s dashboard makes no mention of its revenue, its user numbers or its transactions per kWh.

eBay pulls ahead on the environmental front because it reports its Carbon Usage Effectiveness (CUE) in its dashboard, whereas Facebook completely ignores this vital metric. As we’ve said here before, CUE is a far better measure of how green your data center is, because it reflects the carbon intensity of the energy a facility consumes rather than only how efficiently that energy is used.
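To make the PUE versus CUE point concrete, here is a minimal sketch that computes all three metrics for two made-up data centers. It assumes the commonly cited Green Grid definitions (PUE = total facility energy / IT energy; WUE = site water in litres / IT energy in kWh; CUE = CO2-equivalent emissions from all facility energy / IT energy) and models emissions simply as facility energy multiplied by an assumed grid carbon intensity. The class name, function names and every figure are hypothetical and do not come from either company’s dashboard.

```python
from dataclasses import dataclass


@dataclass
class DataCenterYear:
    """One year of hypothetical measurements for a single data center."""
    name: str
    total_facility_kwh: float  # all energy entering the site
    it_equipment_kwh: float    # energy delivered to servers, storage and network
    site_water_litres: float   # water used on site, e.g. for cooling
    grid_kgco2_per_kwh: float  # ASSUMED carbon intensity of the supplied energy


def pue(dc: DataCenterYear) -> float:
    """Power Usage Effectiveness: total facility energy / IT energy (1.0 is ideal)."""
    return dc.total_facility_kwh / dc.it_equipment_kwh


def wue(dc: DataCenterYear) -> float:
    """Water Usage Effectiveness: litres of site water per kWh of IT energy."""
    return dc.site_water_litres / dc.it_equipment_kwh


def cue(dc: DataCenterYear) -> float:
    """Carbon Usage Effectiveness: kg CO2e from all facility energy per kWh of IT energy."""
    # Simplification: emissions = facility energy x grid intensity (no on-site generation).
    total_kgco2 = dc.total_facility_kwh * dc.grid_kgco2_per_kwh
    return total_kgco2 / dc.it_equipment_kwh


# Two made-up sites with identical energy and water efficiency but very different grids.
coal_site = DataCenterYear("coal-heavy grid", 11_000_000, 10_000_000, 2_000_000, 0.9)
hydro_site = DataCenterYear("hydro grid", 11_000_000, 10_000_000, 2_000_000, 0.02)

for dc in (coal_site, hydro_site):
    print(f"{dc.name}: PUE={pue(dc):.2f} "
          f"WUE={wue(dc):.2f} L/kWh "
          f"CUE={cue(dc):.3f} kgCO2e/kWh")
```

Both sites report the same PUE (1.10) and WUE (0.20 L/kWh), yet under these assumed grid intensities the coal-heavy site’s CUE is about 45 times higher. A dashboard that shows only PUE and WUE would rate the two sites as equally good.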

Facebook does earn some credit for reporting its carbon footprint elsewhere, though not for these data centers. Leaving carbon off these dashboards was obviously a deliberate design decision, and one has to wonder why.

The last big difference between the two is how they are trying to get their dashboards more widely adopted. Facebook says it will submit the code for its dashboard to the Open Compute repository on GitHub. eBay, on the other hand, launched its dashboard at the Green Grid Forum 2013 in Santa Clara. It also published a PDF solution paper, which is a handy backgrounder, but nothing like as useful as dropping your code into GitHub.

The two companies could learn a lot from each other on how to improve their current dashboard implementations, but more importantly, so could the rest of the industry.

What are IBM, SAP, Amazon and the other cloud providers doing to provide these kinds of dashboards for their users? GreenQloud has offered this to its users for ages, and now Facebook and eBay have zoomed past the big providers too. When Facebook contributes its codebase to GitHub, the cloud companies will have one less excuse.

Image credit nicadlr