Equinix is powering one of its Silicon Valley data centers with a 1MW Bloom Energy fuel cell

As we have pointed out here many times, the main cloud providers (particularly Amazon and IBM) are doing a very poor job of either powering their data centers with renewable energy or reporting on the emissions associated with their cloud computing infrastructure.

Given the significantly increasing use of cloud computing by larger organisations, and the growing economic costs of climate change, the sources of the electricity used by these power-hungry data centers are now more relevant than ever.

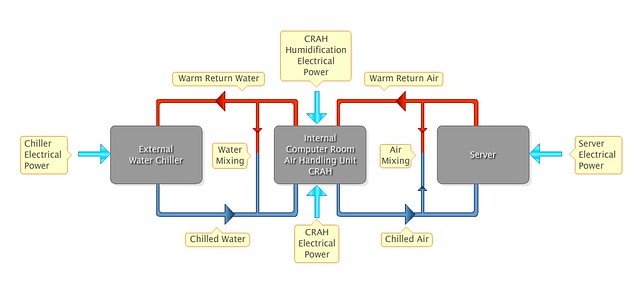

Against this background, it is impressive to see Equinix, a global provider of carrier-neutral data centers (with a fleet of over 100 data centers) and internet exchanges, announce a 1MW Bloom Energy biogas fuel cell project at its SV5 data center in Silicon Valley. Biogas is methane gas captured from decomposing organic matter, such as that found in landfills or animal waste.

Why would Equinix do this?

Well, the first phase of California’s cap-and-trade program for CO2 emissions commenced in January 2013, and this could, in time, lead to increased costs for electricity. Indeed, in their 2014 SEC filing, Equinix note that:

> The effect on the price we pay for electricity cannot yet be determined, but the increase could exceed 5% of our costs of electricity at our California locations. In 2015, a second phase of the program will begin, imposing allowance obligations upon suppliers of most forms of fossil fuels, which will increase the costs of our petroleum fuels used for transportation and emergency generators.
>
> We do not anticipate that the climate change-related laws and regulations will force us to modify our operations to limit the emissions of GHG. We could, however, be directly subject to taxes, fees or costs, or could indirectly be required to reimburse electricity providers for such costs representing the GHG attributable to our electricity or fossil fuel consumption. These cost increases could materially increase our costs of operation or limit the availability of electricity or emergency generator fuels.

In light of this, self-generation using fuel cells looks very attractive, both for energy cost stability and for reduced exposure to rising carbon-related costs.

Beyond this project, according to today’s announcement, Equinix already gets approximately 30% of its electricity from renewable sources, and it plans to increase this to 100% “over time”.

Even better than that, Equinix is 100% renewably powered in Europe despite its growth. So Equinix is walking the walk in Europe, at least, and has a stated aim to go all the way to 100% renewable power.

What more could Equinix do?

Well, two things come to mind immediately:

- Set a firm target date for reaching 100% renewable power, and

- Start reporting all emissions to the CDP (and the SEC)

Given how important a player Equinix is in the global internet infrastructure, the sooner we see them hit their 100% target, the better for all.